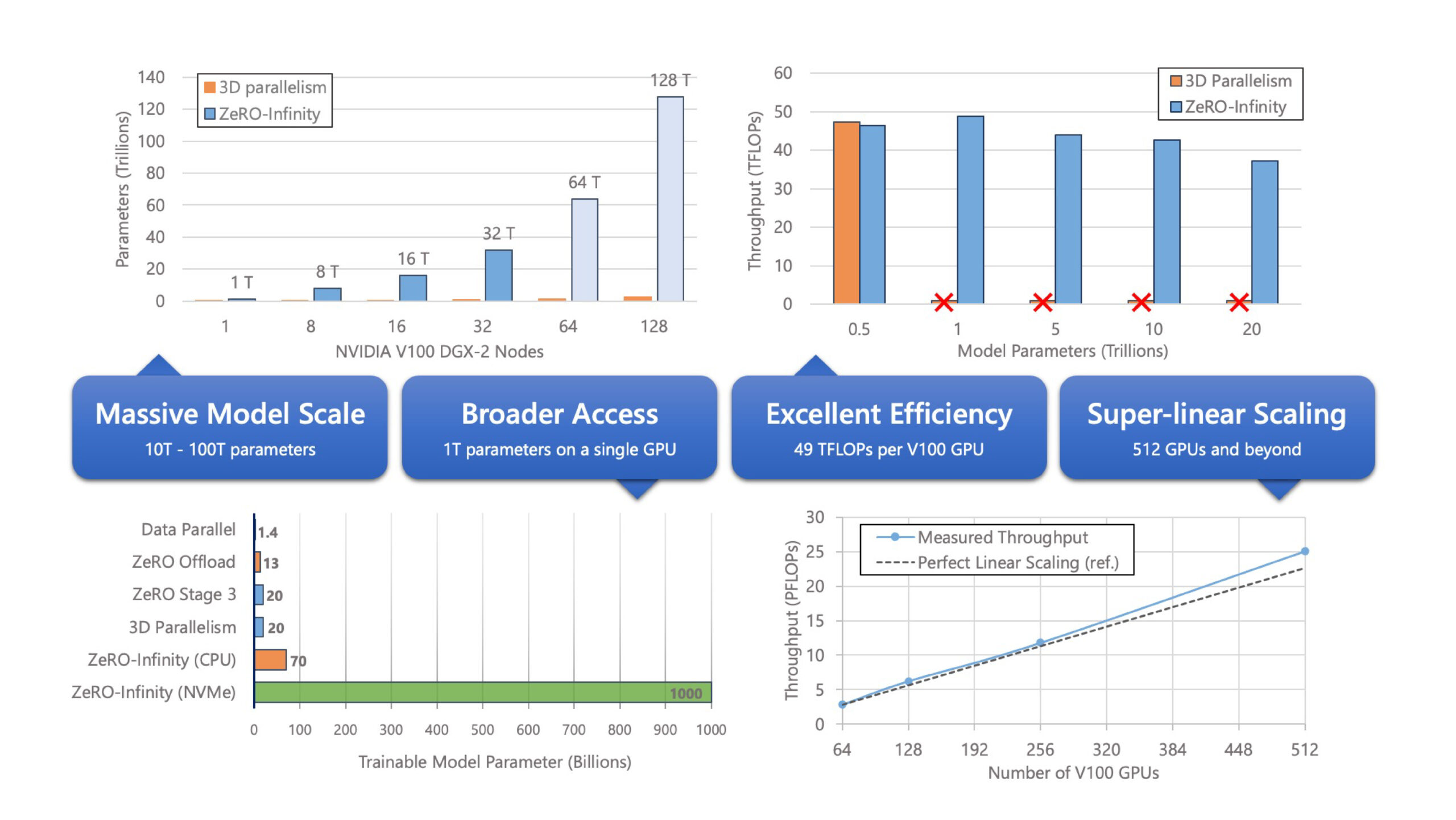

ZeRO-Infinity and DeepSpeed: Unlocking unprecedented model scale for deep learning training - Microsoft Research

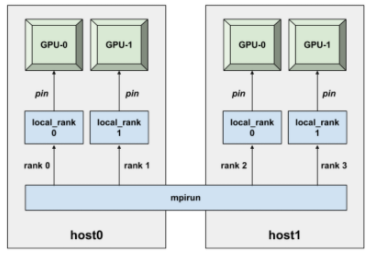

Learn PyTorch Multi-GPU properly. I'm Matthew, a carrot market machine… | by The Black Knight | Medium

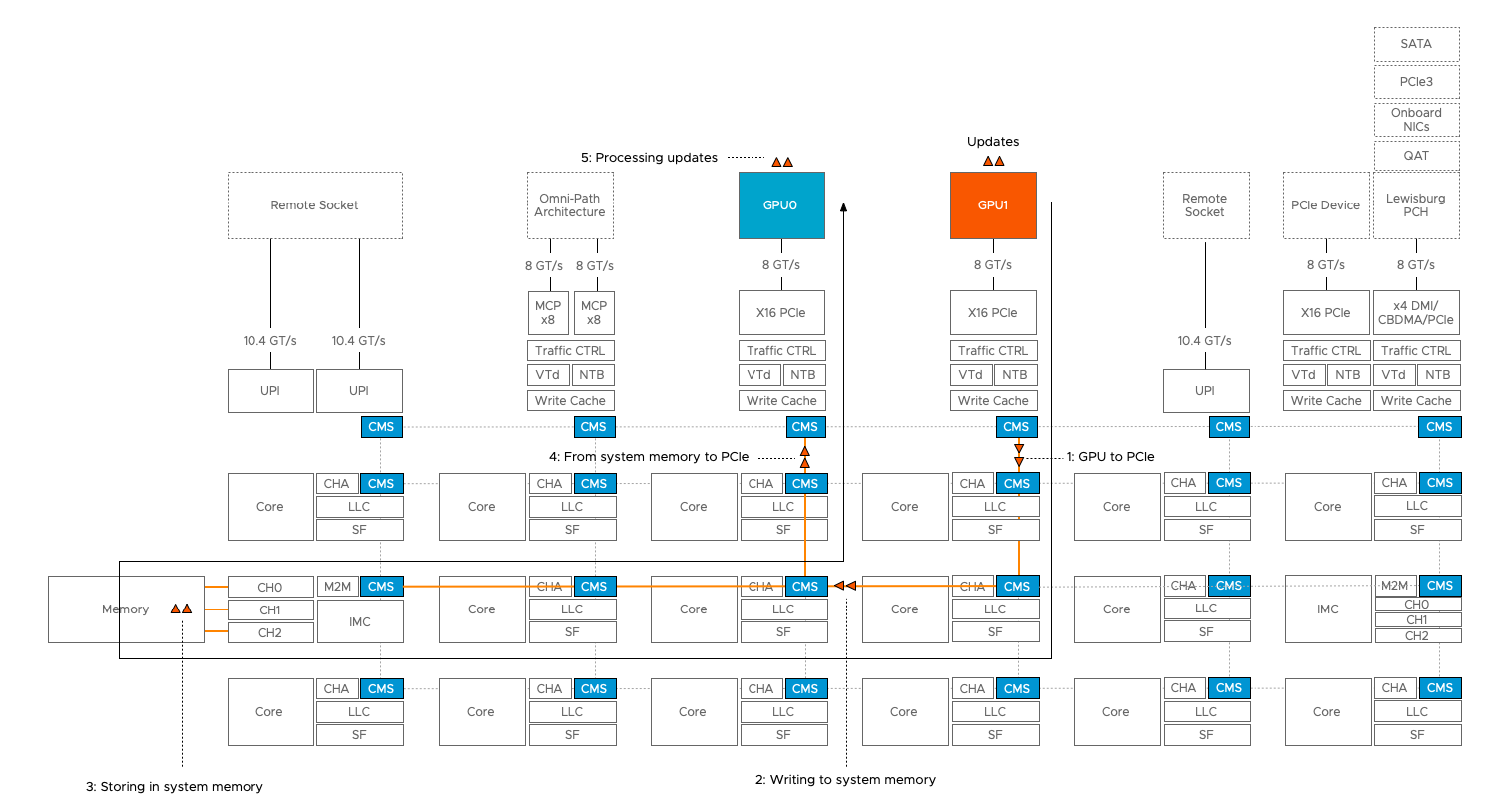

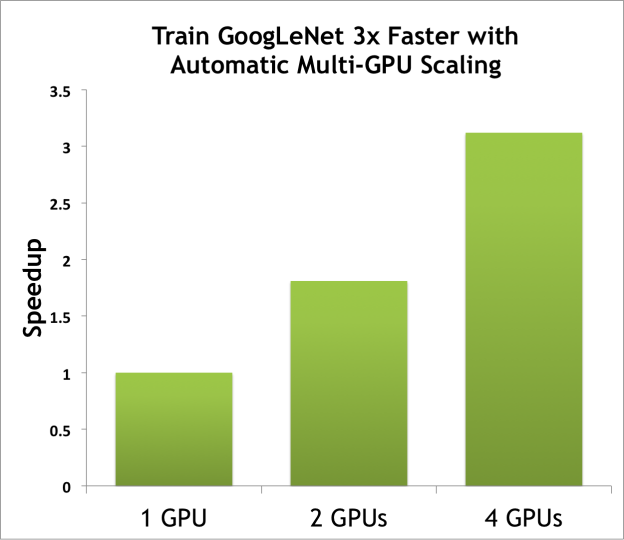

NVIDIA AI Developer on Twitter: "Great news for #deeplearning developers, NCCL 2.3 is now open source and the latest release offers high-performance and efficient multi-node, multi-GPU scaling for deep learning training. https://t.co/QiiYKOBUb1